Sorry to disappoint, but if you’re looking for a quick list of easy, foolproof ways to detect AI-generated videos, you’re not going to find it here. Gone are the days of AI Will Smith grotesquely eating spaghetti. Now we have tools that can create convincing, photorealistic videos with a few clicks.

Right now, AI-generated video is still a relatively nascent modality compared to AI-generated text, images, and audio, because getting all the details right is a challenge that requires a lot of high-quality data. “But there’s no fundamental obstacle to getting higher-quality data,” only labor-intensive work, said Siwei Lyu, a professor of computer science and engineering at the University at Buffalo (SUNY).

That means you can expect AI-generated videos to get way better, very soon, and do away with the telltale artifacts — flaws or inaccuracies — like morphing faces and shape-shifting objects that mark current AI creations. The key to identifying AI-generated videos (or any AI modality), then, lies in AI literacy. “Understanding that [AI technologies] are growing and having that core idea of ‘something I’m seeing could be generated by AI,’ is more important than, say, individual cues,” said Lyu, who is the director of UB’s Media Forensic Lab.

Navigating the AI slop-infested web requires using your online savvy and good judgment to recognize when something might be off. It’s your best defense against being duped by AI deepfakes, disinformation, or just low-quality junk. It’s a hard skill to develop, because every aspect of the online world fights against it in a bid for your attention. But the good news is, it’s possible to fine-tune your AI detection instincts.

“By studying [AI-generated images], we think people can improve their AI literacy,” said Negar Kamali, an AI research scientist at Northwestern University’s Kellogg School of Management, who co-authored a guide to identifying AI-generated images. “Even if I don’t see any artifacts [indicating AI-generation], my brain immediately thinks, ‘Oh, something is off,’” added Kamali, who has studied thousands of AI-generated images. “Even if I don’t find the artifact, I cannot say for sure that it’s real, and that’s what we want.”

What to look out for: Imposter videos vs. text-to-video clips

Before we can get into identifying AI-generated videos, we have to distinguish the different types. AI-generated videos generally fall into two categories: imposter videos and videos generated by text-to-video diffusion models.

Imposter videos

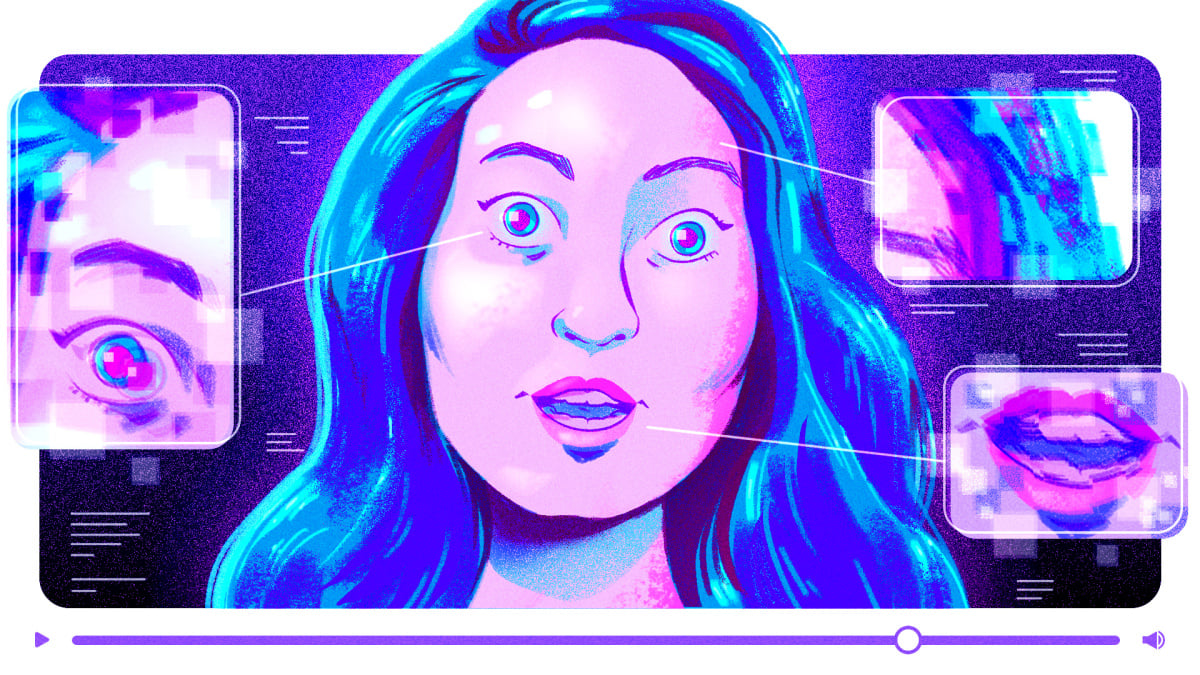

These are AI-edited videos that rely on either face swapping, where a person’s entire face is swapped out for someone else’s (usually a celebrity or politician) and made to say something fake, or lip syncing, where a person’s mouth is subtly manipulated to match different audio.

Imposter videos are generally pretty convincing, because the technology has been around longer and they build on existing footage instead of generating something from scratch. Remember those Tom Cruise deepfake videos from a few years ago that went viral for being so convincing? They worked because the creator, Chris Ume, collaborated with a professional Tom Cruise impersonator who already looked a lot like Cruise, and did lots of minute editing, according to Ume’s interview with The Verge. These days, there is an abundance of apps that accomplish the same thing and can even, terrifyingly, include audio generated from a short sound bite that the creator finds online.

That said, there are some things to look for if you suspect an AI video deepfake. First of all, look at the format of the video. AI video deepfakes are typically “shot” in a talking-head format, where you can see only the speaker’s head and shoulders, with their arms out of view (more on that in a minute).

To identify face swaps, look for flaws or artifacts around the boundaries of the face. “You typically see artifacts when the head moves obliquely to camera,” said digital forensics expert and UC Berkeley Professor of Computer Science Hany Farid. As for the arms and hands, “If the hand moves, or something occludes the face, [the image] will glitch a little bit,” Farid continued. And watch the arms and body for natural movements. “If all you’re seeing is this,” — on our Zoom call, Farid keeps his arms stiff and by his sides — “and the person’s not moving at all, it’s fake.”

If you suspect a lip sync, focus your attention on the subject’s mouth, especially the teeth. With fakes, “We have seen people who have irregularly shaped teeth,” or the number of teeth changes throughout the video, said Lyu. Another strange sign to look out for, he said, is “wobbling of the lower half” of the face. “There’s a technical procedure where you have to exactly match that person’s face,” he explained. “As I’m talking, I’m moving my face a lot, and that alignment, if you got just a little bit of imprecision there, human eyes are able to tell.” That imprecision gives the bottom half of the face a more liquid, rubbery look.

When it comes to AI deepfakes, Aruna Sankaranarayanan, a research assistant at MIT’s Computer Science and Artificial Intelligence Laboratory, says her biggest concern isn’t deepfakes of the world’s most famous politicians, like Donald Trump or Joe Biden, but deepfakes of important figures who may not be as well known. “Fabrication coming from them, distorting certain facts, when you don’t know what they look like or sound like most of the time, that’s really hard to disprove,” said Sankaranarayanan, whose work focuses on political deepfakes. Again, this is where AI literacy comes into play: videos like these require some research to verify or debunk.

Text-to-video clips

Then there are the sexy newcomers: text-to-video diffusion models, which generate videos from text or image prompts. OpenAI made a big splash when it announced Sora, its AI video generator. Although it’s not available yet, the demo videos were enough to astonish people with their meticulous detail, vivid photorealism, and smooth tracking, all allegedly from simple text prompts.

Since then, a bunch of other apps have popped up that can transform your favorite memes into GIFs and imaginative scenes that look like they took an entire CGI team with a Disney budget. Hollywood creatives are right to be outraged about the advent of text-to-video models, which were likely trained on their work and now threaten to replace it.

But the tech isn’t quite there yet: even those Sora videos likely required some slick, time-intensive editing. Sora’s demo videos consist of a series of quick cuts, because the technology isn’t yet good enough to create longer videos that are flawless. So be especially alert to short clips. “If the video is 10 seconds long, be suspicious. There’s a reason why it’s short,” said Farid. “Basically, text-to-video just can’t do a single cut that’s a minute long,” he continued, adding that this is likely to improve in the next six months.

Farid also said to look out for “temporal inconsistencies,” such as “the building added a story, or the car changed colors, things that are physically not possible.” He added, “And often it’s away from the center of attention where that’s happening.” So home in on the background details. You might see unnaturally smooth or warped objects, or a person’s size change as they walk around a building, said Lyu.

Kamali says to look for “sociocultural implausibilities,” or context clues suggesting that the situation itself isn’t plausible. “You don’t immediately see the telltales, but you feel that something is off, like an image of Biden and Obama wearing pink suits,” she said. Or think of the viral image of the Pope in a Balenciaga puffer jacket.

Context clues aside, artifacts are likely to become far less common very soon, and Wall Street is willing to bet billions of dollars on it. (That said, venture capital isn’t exactly known for valuing tech startups based on sound evidence of profitability.)

The artifacts may change, but good judgment remains.

As Farid told Mashable, “Come talk to me in six months, and the story will have changed.” So, relying on certain cues to verify whether a video is AI-generated could get you into trouble.

Lyu’s 2018 paper on detecting AI-generated videos whose subjects didn’t blink properly was widely publicized in the AI community. As a result, people started looking for eye-blinking defects, but as the technology progressed, deepfakes started blinking more naturally. “People started to think if there’s a good eye blinking, it must not be a deepfake and that’s the danger,” said Lyu. “We actually want to raise awareness but not latch on particular artifacts, because the artifacts are going to be amended.”

Building the awareness that something might be AI-generated will “trigger a whole sequence of action,” said Lyu. “Check, who’s sharing this? Is this person reliable? Are there any other sources [corroborating] the same story, and has this been verified by some other means? I think those are the most effective countermeasures for deepfakes.”

For Farid, identifying AI-generated videos and misleading deepfakes starts with where you source your information. Take the AI-generated images that circulated on social media in the aftermath of Hurricane Helene and Hurricane Milton. Most of them were pretty obviously fake, but they still had an emotional effect on people. “Even when these things are not very good, it doesn’t mean that they don’t penetrate, it doesn’t mean that it doesn’t sort of impact the way people absorb information,” he said.

Be cautious about getting your news from social media. “If the image feels like clickbait, it is clickbait,” said Farid, before adding that it all comes down to media literacy. Think about who posted the video and why it was created. “You can’t just look at something on Twitter and be like, ‘Oh, that must be true, let me share it.’”

If you suspect that something is AI-generated, check other sources to see whether they’re also sharing it and whether it all looks the same. As Lyu says, “a deepfake only looks real from one angle,” so look for footage of the same event from other angles. Farid recommends sites like Snopes and PolitiFact, which debunk misinformation and disinformation. As we all continue to navigate the rapidly changing AI landscape, it’s going to be crucial to do the work and trust your gut.

https://mashable.com/article/how-identify-ai-generated-videos